T3THICS WEEK 9: Twitter's dead, long live Twitter

Elon Musk's hostile takeover of Twitter has succeeded, AI phrenology is like whack-a-mole, and should we all just Log Off?

T3THICS is Monika & Marta’s weekly roundup of tech ethics news (and olds) - and our quick thoughts on them.

New to T3THICS? Sign up here: t3thics.substack.com

If you can’t get enough of us here, follow Monika on Twitter and Marta on LinkedIn

Twitter is dead, long live Twitter

Yesterday, what seemed like the entire internet alternately held its breath and hyperventilated with speculation as numerous outlets - NYT, Wall Street Journal, Reuters - reported that the Twitter Board was in final talks for a sale to Elon Musk. Then, midday, the news dropped that the Board had accepted the offer (with nary a peep from leadership), and Twitter exploded.

In lieu of recapping the deluge, here are a few of the takes we liked:

If Twitter is dead, should we all just Log Off?

The big question on everyone’s mind is: what will happen to Twitter? Musk fans and Musk-agnostics see a bright future prioritizing “free speech” on the platform. Most of that fixation on free speech stems from an erroneous belief that right/alt-right viewpoints are silenced, when in fact research shows they’re amplified more on Twitter and elsewhere on social media than other political views. Most tech ethics academics paint a different, more worrying picture: a platform that removes protections against spam, bots, harassment and more in pursuit of freer speech will ultimately be more hostile, less usable and, as it haemorrhages users, boring.

Everything before this moment already feels kind of distant, a past that’s receding faster than we can commit it to memory. Twitter might survive or it might not, but it will certainly mutate, maybe for the better, most likely for the worse. So the question is: do we all just Log Off? Join Mastodon or Discord or Telepath? Wait for the next big thing in Social Media? If you have any bright ideas, we’re all ears.

AI Phrenology Whack-a-Mole

In the latest assertion about the emotional powers of AI systems, a startup claims it can interpret students’ emotions in a virtual classroom setup. Not only does this wade into the controversy over whether AI systems should be reading emotions at all, it adds the further problem of being used on minors.

Researchers who study AI harms were quick to push back:

The other big controversy this week was a thread by researcher Joshua Peterson describing a model trained to essentially encode racism, sexism and xenophobia, which his team uncritically calls “inferences”.

Monika’s Things

In news that will probably go unnoticed in the flood this week, the EU’s Digital Services Act has been finalized. Some of the protections for E2EE that had been battlegrounds for privacy activists didn’t make it in, and the list of dark patterns the act seeks to curb has been shortened, but it is still a welcome step in the direction of reining in algorithmic harm from the big players.

There have been more than a few discussions recently among top AI ethics researchers around the blind spots in the field of ML and AI research. This thread by expert Deb Raji looks at how ML hype causes researchers to focus too much on models to the detriment of their key ingredient that’s seen as way less sexy: data

We shouldn’t use robots for everything but we’re going to try anyway - now, in nursing homes

Relevant to Twitter and SoMe discussions in general: a new paper by Eric Goldman on content moderation approaches besides content/user removal

Crypto scams are now targeting the offline and elderly by posing as the Wall Street Journal

Good news on the crypto front: the EU is considering banning Bitcoin

Canada has updated its Algorithmic Impact Assessment (AIA)

What does building an intersectional feminist internet look like?

Snapchat has sustained a surprising amount of growth since its near-demise in 2016, despite having to adjust to Apple’s privacy measures (which have now famously hobbled Facebook’s earning potential)

A necessary and important paper by Veena Dubal on the ways in which labor law has failed gig workers who were and are essential in a pandemic

In better gig economy news: France convicts Deliveroo execs and hands out fine for circumventing labor legislation

AI researcher Sandra Wachter exposes how AI use in policing is one part injustice and one part snake oil:

‘Following a 10-month investigation into the use of advanced algorithmic technologies by UK police, including facial recognition and various crime “prediction” tools, the Lords Home Affairs and Justice Committee (HAJC) found that there was “much enthusiasm” about the use of AI systems from those in senior positions, but “we did not detect a corresponding commitment to any thorough evaluation of their efficacy”.’

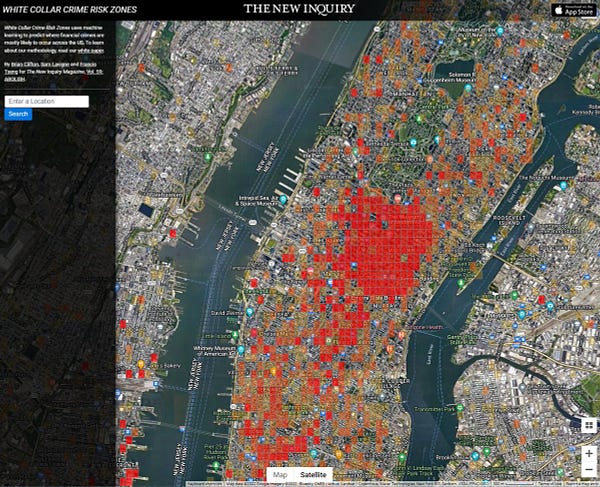

On the topic of policing: what if we approached white collar financial crime the same way we approach petty theft?

This piece by Jonathan Haidt comparing social media to the parable of the Tower of Babel caused quite a stir

Even Barack Obama spoke out about the harms of disinformation on social media platforms this week

The Radical AI Podcast is back for another season

A great thread on the fairness crisis in AI

A man hooks up GPT-3 to a microwave and anthropomorphizes it

FTC signals that algorithmic destruction may be the preferred tool to penalize companies using ill-gotten data

New research from the Neiman lab at McGill University shows how quickly private equity takeovers of local news create news deserts

Taylor Lorenz of the Washington Post has an in-depth exposé of the woman behind the LibsofTikTok account that has been fueling hatred and harassment campaigns against LGBTQ+ people (and why it may get taken down due to trademark infringement)

Excellent reporting by Karen Hao on AI entrenching colonialism:

Maybe all AI can offer us is horrors: