T3THICS WEEK 17: Ghost in the Machine

AI-generated "Loab" is haunting the internet, Apple is leaning into fear-based marketing and the Dutch Government is set to abolish an intelligence tech oversight board.

T3THICS is a weekly roundup of the latest news & spicy conversations from the responsible tech space. Curation & analysis is brought to you by Monika Viktorova & Marta Janczarski, & SEO wizardry by Monika Kardyś.

New to T3THICS? Sign up here: t3thics.substack.com or follow us on Twitter!

Marta’s Links:

This past week Apple launched its updated models and products. However, what was most noteworthy was this year's marketing approach. Gone are the years of excitement about features, new products and revolutionary new capabilities; the focus has shifted to fear for your life without Apple products. The broadcast was filled with disaster scenarios and how having a range of Apple products makes you safer. Whether Apple is tapping into society's post-pandemic fear and risk-averse mood, or just trying to find a new edge in a mature market, we are definitely in a new era.

How safe is safe enough? A seemingly unanswerable question, yet one UK regulators have faced of late. While autonomous vehicles don't make the headlines as often these days, it's been busy in that field. Regulators are grappling with setting the proper guidelines for integrating these vehicles into our existing systems, while also trying to take this opportunity to update how our transportation systems work. The UK government is working towards autonomous vehicles on UK roads by 2025. It has included a 'safety ambition', which it describes as having the same level of safety as a competent human driver. However, individuals and stakeholder groups tend to expect a higher level of safety assurance from these vehicles than from current human drivers. But what are the benchmarks? How do you quantify what is safer than an average driver? All questions to be answered in the coming months and years.

We would be remiss if we didn't include the current hiring freezes and layoffs in the tech industry. Most companies have instituted a hiring freeze, rescinded offers or gone ahead with layoffs over the summer. The fear of a looming recession, the possible 'bite-back' against the great resignation, and investors' ever-increasing demands for continuous record growth have created an environment of recruiting austerity. The unemployment rate is still low, so either the layoffs haven't cut that deep yet, or the figures have yet to be updated to reflect the instability in the job market.

In case the major telecom providers needed a helping hand in securing more of their market, Apple provided one with its latest iPhones. With the announcement came news that, in the US, the phones will no longer have a physical SIM card tray, just eSIM capabilities. While this doesn't pose any difficulties for those subscribed to the major telecom providers, it limits the usability of these phones with smaller providers and makes it harder to swap SIM cards while traveling abroad. While the general prediction is a full transition from physical SIM cards to eSIMs, one would expect a transitional period with both capabilities.

With the European gas and energy crisis, some welcome good news this week for electricity: “An international team led by UCC quantum physicist Prof Séamus Davis [in partnership with the University of Oxford] has uncovered the atomic mechanism behind room-temperature superconductors, potentially paving the way for super-efficient electrical power.” This is a critical problem to solve, as superconductors currently work only in extremely cold environments that are difficult to maintain. While this is still at the academic research stage, it's a welcome advancement that will hopefully translate into our everyday life soon.

The threat of personal liability for breaches of privacy and compliance is not unheard of, but one could say it is rare in tech. HIPAA, for example, has included personal liability for "knowing acts" since 2009, with personal fines of up to $250,000 and imprisonment for up to 10 years. The provision exists but has been largely unenforced to date. Completing annual training was the measuring stick for "knowing" vs. "not-knowing" what your accountabilities were, which is why sanction policies were applied in Health for non-compliance with training mandates. Personal liability will now be put to the test as an ex-Uber executive goes to trial on criminal charges over how he handled the 2016 security breach. Could this set a precedent for future legal action? The trial will be closely watched for the implications it could have.

Monika’s Links:

Let’s check back in with the battle for Twitter’s soul, shall we? If you recall, we covered Elon Musk’s hostile takeover attempt back in Week 8. Almost since he announced the purchase, he’s been fighting to be let out of his tender offer. Most recently, he was told by a judge that he can’t delay the trial, although he’ll be allowed to feature a whistleblower who will allegedly support his claim that Twitter’s user numbers are off. (A reminder that this isn’t the only legal action he’s involved in.)

Apropos of Queen Elizabeth’s death and the fake viral screenshots that circulated prior to the official announcement, here’s a quick thread on how to spot a fake screenshot.

Facebook doesn’t know where your data is and, apparently, it can’t find out. In a recent court hearing, Facebook engineers admitted that they can’t fully map where your data ends up in the organization… which also means they’re admitting non-compliance with GDPR. Your move, regulators!

Cool new incubator alert: Omidyar Network has announced an $8M “The Tech We Want” fund to build ethical tech with a diverse group of 15 “tech luminaries” including big names like Dr. Safiya Noble and Ellen Pao.

Just in time for back-to-school, check out the recently published The Ethics of Artificial Intelligence in Education: Practices, Challenges and Debates.

Amazon was apparently forcing employees to Covid test their colleagues without PPE, allegedly causing illness and in the case of at least one employee, a Covid-related death.

Two separate conversations echoing this week about the role AI should play in government: Emily M. Bender asks what protections we have in place against any tech harm coming from machine learning in public services. Elsewhere, Bert Hubert, a technical risk analysis specialist and member of the Dutch intelligence and security services oversight board, “Toetsingscommissie Inzet Bevoegdheden” or TIB, has resigned so that he can publicly speak about his grave concerns regarding a new law set to pass in parliament. This law will abolish the oversight board and gut human oversight of algorithmic surveillance of bulk intercepted data.

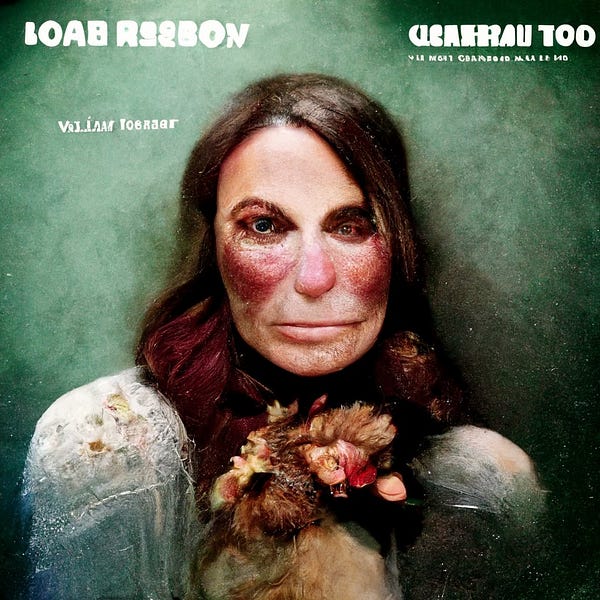

Bravely forging ahead with spooky season: artist Supercomposite posted a cool thread about an AI-generated woman that kept showing up in their images. The story took on a life of its own, with the figure being dubbed a “demon haunting the internet” - here’s a good explainer for why that’s a little melodramatic from our favorite TikToker Dr. Casey Fiesler. And for our fans of oldschool psychology: a thread that looks at how AI refracts the horrors of our (training data) id to produce images like Loab.